Artificial intelligence has transformed what’s possible in mortgage fraud, and not in a good way. The same technology powering productivity tools and automation across the industry is now being weaponized to fabricate documents, clone identities, and intercept transactions at a scale and sophistication that legacy detection systems were never built to handle.

The challenge for lenders is no longer just identifying fraud. It’s keeping pace with an adversary that is evolving faster than traditional controls can respond and, increasingly, meeting the expectations of regulators who are watching closely.

The Regulatory Stakes Just Got Higher

On April 13, 2026, Fannie Mae released new AI governance guidelines that take effect August 6 for all sellers and servicers of loans it guarantees, joining Freddie Mac, which implemented similar requirements in March. The message from the GSEs is unambiguous: AI use in mortgage lending must be safe, legal, ethical, and aligned with agency expectations.

The new Fannie Mae requirements are sweeping. Lenders must establish internal AI governance policies, designate an oversight official responsible for annual compliance reviews, deliver regular AI training to loan officers and relevant staff, and demonstrate active risk management aligned with their own risk tolerance. Fannie Mae’s guidance puts it plainly: “The pace of innovation brings heightened responsibility. As AI/ML models grow more complex and more deeply embedded in critical processes, seller/servicers must ensure these technologies are deployed safely, legally, ethically and in alignment with Fannie Mae’s expectations.”

Perhaps most significantly for the vendor community, Fannie Mae explicitly states that lenders will be held responsible for noncompliant AI use by their subcontractors and vendors and mandates appropriate supervision of those providers. This is not a future risk. It is a current compliance obligation with a hard effective date, and lenders who have not yet taken stock of how AI is being used across their operations, including by the partners and vendors they rely on, are already behind.

The AI Fraud Threat Is Already Here

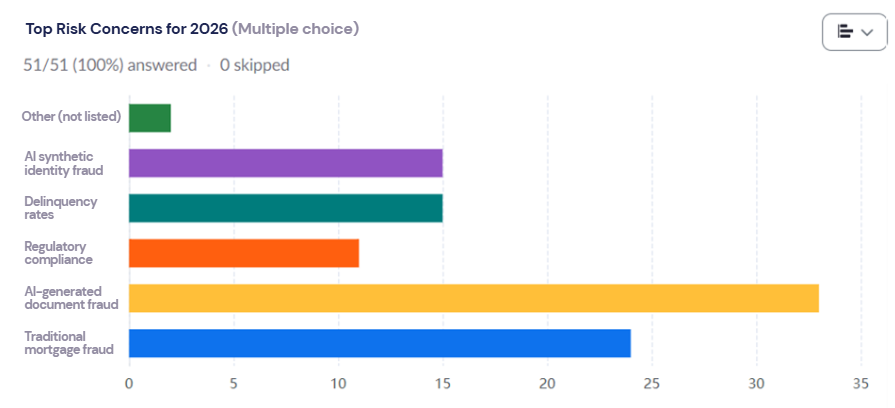

The regulatory urgency reflects a fraud environment that has already reached alarming levels. In a recent Indecomm webinar, “QC as an Early Warning System,” featuring GenWay’s Chief Risk and Compliance Officer Alicia Gazotti, fifty-one mortgage QC professionals were asked to identify their top risk concerns for 2026. The results were striking.

AI-generated document fraud ranked as the single highest concern by a wide margin, with traditional mortgage fraud and AI synthetic identity fraud close behind. Regulatory compliance and rising delinquency rates rounded out the top five. For those of us who have been watching the industry for a while, the results were not surprising, but they were clarifying. AI-driven fraud is not an emerging concern on the horizon. It is the defining risk of the moment.

Fraud losses enabled by generative AI could reach $40 billion in the U.S. by 2027, growing at 32% annually. According to Cotality’s 2026 data, 1 in 118 mortgage applications already shows fraud indicators. Behind those numbers is a new generation of AI-driven fraud that every lender needs to understand.

Generative AI can now produce documents that are virtually indistinguishable from legitimate ones, defeating human reviewers and legacy fraud systems alike.

As Alicia Gazotti, Chief Risk and Compliance Officer at GenWay Home Mortgage, put it during a recent Indecomm Fireside Chat:

“AI-generated documents – they have the ability to produce pay stubs, W-2s, bank statements, all sorts of documentation, and it’s really become more difficult for humans to detect nowadays. Agencies are finding different ways to use their AI to detect fraud and using it as a countermeasure to combat mortgage fraud. So they’re a little bit ahead of the game.”

Fraudsters are pairing these fabricated documents with shell LLCs and fictitious business entities to create a seemingly credible paper trail, making income fraud harder than ever to catch at the origination stage.

Beyond documents, AI is being used to clone audio and video calls, impersonate borrowers and lender staff, and construct synthetic identities by blending real and fabricated personal data. These impersonations are then used to authorize wire transfers, bypass identity verification, and misdirect funds, often before anyone realizes something is wrong. Deepfake technology has made AI-assisted wire fraud one of the most financially devastating threats in real estate transactions today, and funds that are not secured within the first 24 hours are unlikely ever to be recovered.

Agencies have taken notice. They are now deploying their own AI in post-closing reviews to detect document fraud. That means lenders whose QC programs are not built to catch AI-generated fraud before funding face not just financial losses but also growing repurchase risk and the very compliance exposure the new GSE guidelines are designed to address.

Brian Margulies, Vice President of Operations at Indecomm Global Services, sees it firsthand:

“The old days, you could kind of tell if someone was cut and pasting: the numbers don’t add up, the font’s crooked, you could eyeball some of those. Now, with what can be generated, having a robust QC program, particularly re-verifying information on the front end during origination, is critical.”